In my previous post, I stumbled upon a huge performance difference in the mutex-based queue that I was testing on Windows vs Linux. In particular, the Windows version was twice as fast as the Linux one. I started investigating by googling the performance of Windows vs Linux mutexes.

I have not solved that riddle yet, but what caught my attention were the comments around the Windows CreateMutex function. It turns out that CreateMutex is not the right synchronization primitive when working with threads. Strictly speaking, it does work for synchronizing threads, but it is far more powerful than that, and you pay a needlessly high cost if you use it just for threads.

In the MSDN page of CreateMutex, we read:

> Two or more processes can call CreateMutex to create the same named mutex. The first process actually creates the mutex, and subsequent processes with sufficient access rights simply open a handle to the existing mutex. This enables multiple processes to get handles of the same mutex, while relieving the user of the responsibility of ensuring that the creating process is started first.

So, it turns out that a Windows mutex is an inter-process synchronization primitive. The cost you pay for this luxury is that locking a Windows mutex requires leaving your process and calling into the Windows kernel. This system call can be quite expensive.

The right primitive for synchronizing threads on Windows is the critical section (CRITICAL_SECTION). Critical sections are fast because, when there is no lock contention (the usual case), they are acquired entirely in user space; only a contended lock has to wait in the kernel. If you need finer-grained locking, the related slim reader/writer (SRW) lock can be acquired in shared or exclusive mode, which is very useful if you want to implement a multiple-reader, single-writer lock. But there's no reason to over-complicate things for plain mutual exclusion if you can use std::mutex.

So I set out to compare the performance of a Windows mutex against std::mutex. I wrote a small program that spawns N worker threads and lets them concurrently access and increment a single integer variable until it reaches 10,000,000.
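For readers who want to try something similar, here is a minimal sketch of such a benchmark. It is not the original program: `run_workers` and `WinMutex` are hypothetical names made up for illustration, and the Windows wrapper is only compiled when `_WIN32` is defined. The idea is that the same worker loop is run against any lock type that exposes `lock()` and `unlock()`.

```cpp
#include <cassert>
#include <chrono>
#include <mutex>
#include <thread>
#include <vector>

#ifdef _WIN32
#include <windows.h>
// Hypothetical wrapper that gives a Win32 mutex the lock()/unlock()
// interface expected by std::lock_guard.
class WinMutex {
public:
    WinMutex() : handle_(CreateMutexW(NULL, FALSE, NULL)) {}  // unnamed, not owned
    ~WinMutex() { CloseHandle(handle_); }
    WinMutex(const WinMutex&) = delete;
    WinMutex& operator=(const WinMutex&) = delete;
    void lock() { WaitForSingleObject(handle_, INFINITE); }   // kernel call
    void unlock() { ReleaseMutex(handle_); }                  // kernel call
private:
    HANDLE handle_;
};
#endif

// Hypothetical helper: `workers` threads increment a shared counter under
// `Lock` until it reaches `target`. Returns the elapsed time in milliseconds
// and stores the final counter value in `final_count`.
template <typename Lock>
double run_workers(int workers, long target, long& final_count) {
    assert(workers > 0 && target >= 0);
    Lock lock;
    long counter = 0;

    auto work = [&] {
        for (;;) {
            std::lock_guard<Lock> guard(lock);  // released at end of each iteration
            if (counter >= target) return;
            ++counter;
        }
    };

    auto start = std::chrono::steady_clock::now();
    std::vector<std::thread> threads;
    for (int i = 0; i < workers; ++i) threads.emplace_back(work);
    for (auto& t : threads) t.join();
    auto stop = std::chrono::steady_clock::now();

    final_count = counter;
    return std::chrono::duration<double, std::milli>(stop - start).count();
}
```

Calling `run_workers<std::mutex>(4, 10000000, n)` and, on Windows, `run_workers<WinMutex>(4, 10000000, n)` gives two timings to compare. Absolute numbers will depend on the compiler, OS version and hardware, so treat them as relative measurements only.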

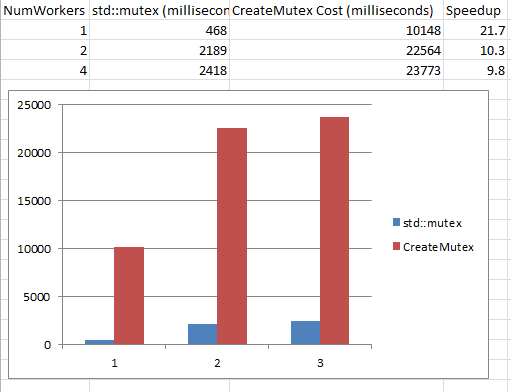

The timing results under Windows 7 / VS2013 / i5 3570 are the following:

The work done in each iteration is trivial (incrementing an integer), so the dominant factor is the synchronization cost. This cost grows as more workers are added and compete for the lock. Under no contention (1 worker) the difference is a staggering 20x. Once contention starts to occur, the difference is roughly 10x.

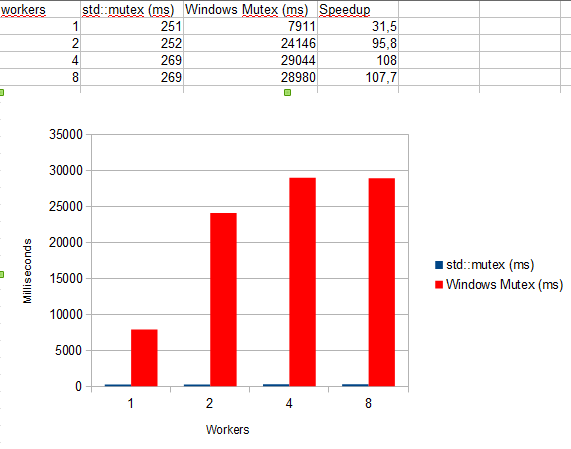

I repeated the experiment on Windows 10 with Visual Studio 2015, on another i5 3570 box:

The results are even more dramatic. The cost of std::mutex has been cut in half, while the Windows mutex remains much slower. The difference under no contention is around 30x, while under contention it reaches two orders of magnitude.

The code is available if you want to experiment.